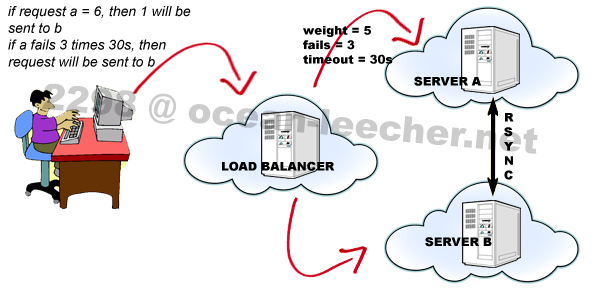

In the previous post we talked about simple DNS failover using two nameservers / IP addresses. Now we move on to something more exciting: we will use a frontend server to control the backend servers. Here is the illustration.

One frontend server decides whether to send the visitor to server A or server B. Here I am using NginX as the frontend and also NginX as the backend server.

Why don’t you use another web server as the backend?

I like NginX; for me, NginX configuration is easier to understand than that of other web servers. Before we start to configure it, install NginX on the frontend and backend servers. I’m using CentOS 5, by the way.

    wget http://pkgs.serversreview.net/files/nginx-1.1.13.tar.gz
    tar -zxvf nginx-1.1.13.tar.gz
    cd nginx-1.1.13
    useradd www
    passwd www
    ./configure --prefix=/usr \
        --sbin-path=/usr/sbin/nginx \
        --conf-path=/etc/nginx/nginx.conf \
        --error-log-path=/var/log/nginx/error.log \
        --pid-path=/var/run/nginx/nginx.pid \
        --lock-path=/var/lock/nginx.lock \
        --http-log-path=/var/log/nginx/access.log \
        --http-client-body-temp-path=/var/tmp/nginx/client/ \
        --http-proxy-temp-path=/var/tmp/nginx/proxy/ \
        --http-fastcgi-temp-path=/var/tmp/nginx/fcgi/ \
        --user=www --group=www \
        --with-http_ssl_module \
        --with-http_flv_module \
        --with-http_mp4_module \
        --with-http_gzip_static_module \
        --with-http_realip_module \
        --with-http_addition_module \
        --with-http_xslt_module \
        --with-http_image_filter_module \
        --with-http_geoip_module \
        --with-http_sub_module \
        --with-http_dav_module \
        --with-http_random_index_module \
        --with-http_secure_link_module \
        --with-http_degradation_module \
        --with-http_stub_status_module \
        --with-http_perl_module \
        --with-mail \
        --with-file-aio \
        --with-mail_ssl_module \
        --with-ipv6
    make
    make install

In the configuration above, as usual, I am using the “www” user and group for NginX. Next, download the NginX init script and make it executable.

    wget -O /etc/rc.d/init.d/nginx http://pkgs.serversreview.net/txt/nginx
    chmod +x /etc/init.d/nginx
    chkconfig --add nginx
    chkconfig nginx on

Okay, NginX has been installed on the frontend and backend servers. Now we configure the frontend server. Here is a very basic working NginX configuration for the frontend server.

    user www www;
    worker_processes 1;

    events {
        worker_connections 1024;
    }

    http {
        include mime.types;
        default_type application/octet-stream;
        sendfile on;
        keepalive_timeout 65;
        gzip on;

        upstream backend {
            server backend_ip1:backend_port_other_than_80;
            server backend_ip2:backend_port_other_than_80;
        }

        server {
            listen 80;
            server_name serversreview.net;

            location / {
                proxy_pass http://backend;
            }
        }
    }

As you can see above, the first directive sets the “www” user and group for NginX. The second part is the “events” block (EventsModule), which controls how NginX deals with connections. The part you need to look at is inside the “http” block (HttpCoreModule): check the “upstream” block. The NginX HttpUpstreamModule is used for load balancing across backend servers.

    upstream backend {
        server backend_ip1:backend_port_other_than_80;
        server backend_ip2:backend_port_other_than_80;
    }

Set the IP addresses of your first and second backend servers, and use a port other than 80, for instance 8080. Then you refer to the “backend” upstream group by name in proxy_pass

    location / {
        proxy_pass http://backend;
    }

Another parameter of the upstream “server” directive is “weight”. Use it if you want to be more specific about the share of requests each backend server receives. For instance, if you want the first backend server to serve more requests than the second backend server, then you can set

    server backend_ip1:backend_port_other_than_80 weight=5;
    server backend_ip2:backend_port_other_than_80;

The configuration above means 5:1 (the default weight is 1): five out of every six requests will be sent to the first backend server and the remaining one will be sent to the second backend server.
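To see what a 5:1 weight does to the request distribution, here is a small Python sketch of a smooth weighted round robin. It is a simplified model for illustration only, not NginX's actual code; the names backend1 and backend2 are placeholders for your two backend servers.

```python
from collections import Counter


def smooth_weighted_round_robin(weights, requests):
    """Yield one server name per request, in proportion to each weight."""
    current = {name: 0 for name in weights}
    total = sum(weights.values())
    for _ in range(requests):
        # Every server earns credit equal to its weight on each request.
        for name, weight in weights.items():
            current[name] += weight
        # The server with the most credit is chosen and pays back the total.
        chosen = max(current, key=current.get)
        current[chosen] -= total
        yield chosen


# Placeholder names standing in for backend_ip1 (weight=5) and backend_ip2 (weight=1).
weights = {"backend1": 5, "backend2": 1}
picks = list(smooth_weighted_round_robin(weights, 6))
print(picks)
print(Counter(picks))
```

Out of six requests, backend1 is picked five times and backend2 once, which matches the 5:1 ratio described above.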

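Two more parameters, useful for failover, are “max_fails” and “fail_timeout”

    server backend_ip1:backend_port_other_than_80 max_fails=3 fail_timeout=30s;
    server backend_ip2:backend_port_other_than_80;

With this setting, if 3 attempts to reach the first backend server fail within 30 seconds, NginX considers that server unavailable for the next 30 seconds and sends requests to the second backend server during that time. After that, it tries the first backend server again.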
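The interplay of “max_fails” and “fail_timeout” is easier to follow with a toy model. The Python sketch below is my own simplification, not NginX's implementation: the Peer class and pick function are hypothetical names, time is passed in by hand, and server selection is reduced to "first available" so the focus stays on failure tracking. The defaults mirror NginX's (max_fails=1, fail_timeout=10s).

```python
class Peer:
    """Toy model of one upstream server with passive failure tracking."""

    def __init__(self, name, max_fails=1, fail_timeout=10.0):
        self.name = name
        self.max_fails = max_fails
        self.fail_timeout = fail_timeout
        self.failures = []      # timestamps of recent failed attempts
        self.down_until = 0.0   # the peer is skipped until this time

    def available(self, now):
        return now >= self.down_until

    def record_failure(self, now):
        # Keep only the failures that fall inside the fail_timeout window.
        self.failures = [t for t in self.failures if now - t < self.fail_timeout]
        self.failures.append(now)
        if len(self.failures) >= self.max_fails:
            # Too many failures: skip this peer for fail_timeout seconds.
            self.down_until = now + self.fail_timeout
            self.failures = []


def pick(peers, now):
    """Return the first available peer, or None if all are marked down."""
    for peer in peers:
        if peer.available(now):
            return peer
    return None


primary = Peer("backend1", max_fails=3, fail_timeout=30.0)
secondary = Peer("backend2")
peers = [primary, secondary]

# Three failed attempts within 30 seconds mark backend1 as unavailable.
for t in (0.0, 1.0, 2.0):
    primary.record_failure(t)

print(pick(peers, 5.0).name)   # backend1 is skipped, backend2 answers
print(pick(peers, 40.0).name)  # the 30 seconds have passed, backend1 is back
```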

Okay, we have finished the basic settings for the frontend server. Now for the backend server: the configuration is the same as a usual NginX virtualhost setting. First we create the folders for the domain, public_html, and logs under the “www” user.

    mkdir -p /home/www/serversreview.net/public_html /home/www/serversreview.net/logs

And below is the basic configuration for the backend server.

    user www www;
    worker_processes 1;

    events {
        worker_connections 1024;
    }

    http {
        include mime.types;
        default_type application/octet-stream;
        sendfile on;
        keepalive_timeout 65;
        gzip on;

        server {
            listen 8080;
            server_name serversreview.net;
            access_log /home/www/serversreview.net/logs/access.log;
            error_log /home/www/serversreview.net/logs/error.log;

            location / {
                root /home/www/serversreview.net/public_html/;
                index index.html index.htm index.php;
            }
        }
    }

Check the “server” block inside the “http” block (HttpCoreModule)

- “listen 8080;”: I am using port 8080 for the backend server, so set port 8080 in the upstream block on your frontend server as well.

- “root /home/www/serversreview.net/public_html/;”: this is the location of my public_html directory, under the domain folder of the www user.

After adding to or editing nginx.conf, do not forget to restart NginX

    /etc/init.d/nginx restart

Okay, your configuration for the backend and frontend is finished. Now test whether it works: write an HTML file inside “/home/www/serversreview.net/public_html” on the backend servers and try to access it through your domain in a browser. If your HTML file appears in the browser, then success!
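If you would rather check from a script than from a browser, here is a minimal Python sketch using only the standard library. The helper names build_request and fetch_status and the file name test.html are my own assumptions, not anything NginX provides. Setting the Host header lets you request a server by IP address while still asking for the domain.

```python
import urllib.request


def build_request(url, host_header=None):
    """Prepare a GET request, optionally overriding the Host header."""
    request = urllib.request.Request(url)
    if host_header:
        # Ask for the domain even when url points at a bare IP address.
        request.add_header("Host", host_header)
    return request


def fetch_status(request, timeout=5):
    """Send the request and return the HTTP status code.

    urlopen raises urllib.error.HTTPError for 4xx/5xx answers.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


# Example (test.html is a hypothetical file placed in public_html):
#   fetch_status(build_request("http://serversreview.net/test.html"))
```

A status of 200 through the domain means the frontend reached one of the backend servers and got the file back.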

Yeah, yippie, yippie!!!

Wait, there are a few settings you can use to improve your virtualhost configuration:

- First, you may not want visitors to access your site through the backend server’s IP address, e.g. your site should be accessed via the domain, http://serversreview.net:80 (frontend), but visitors can also reach it at http://212.121.212.121:8080 (backend). To prevent that, add the following directives inside the “location” block of your backend server

      allow your_frontend_server_ip_address;
      deny all;

so if a visitor accesses your site via the backend server, they will get your 403 error page. That’s good, but I think it is still not very nice to show a 403 error page to someone who accessed the IP address. How about we redirect the 403 to your domain? Then anyone accessing your backend IP will be redirected to your main site. Add the following directive inside your “server” block

      error_page 403 http://serversreview.net;

where http://serversreview.net is your frontend / domain.

- Second, if you want to serve more than one domain, you can just add another virtualhost configuration on the frontend and backend like the one above, but you need to add the “proxy_set_header Host $http_host;” directive to each of your frontend’s virtualhosts

      location / {
          proxy_set_header Host $http_host;
          proxy_pass http://backend;
      }

That will make NginX pass the visitor’s “Host” request header (the domain, not the IP) on to the backend. If you don’t, NginX sends the upstream name (“backend”) as the Host, no virtualhost on the backend matches it, and every request for your domains is served by the first virtualhost on your backend server.
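Why the Host header matters on the backend can be shown with a few lines of Python. This is only a sketch of name-based virtualhost selection with exact matching (real NginX also supports wildcards and regular expressions); select_server is a hypothetical helper and example.org stands in for your second domain.

```python
def select_server(servers, host_header):
    """Pick the virtualhost whose server_name matches the Host header.

    servers is an ordered list of (server_name, label) pairs. The first
    entry plays the role of the default server, as on a backend where no
    virtualhost is explicitly marked as default.
    """
    hostname = host_header.split(":")[0].lower()
    for server_name, label in servers:
        if server_name == hostname:
            return label
    # No match: fall back to the first virtualhost.
    return servers[0][1]


# example.org is a hypothetical second domain.
backend_servers = [("serversreview.net", "site A"), ("example.org", "site B")]

# Without proxy_set_header, the frontend sends the upstream name as Host.
print(select_server(backend_servers, "backend"))      # site A
# With proxy_set_header Host $http_host, the visitor's domain is passed on.
print(select_server(backend_servers, "example.org"))  # site B
```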

Is that all? Yeah, that’s all for the second part. Let’s go to the more exciting section, part 3, here.